How AI Is Changing the Visual Content Production Pipeline in E-Commerce

There used to be a clear shape to a visual production cycle. Brief, mood, shoot, retouch, deliver. Every stage had its people, its processes, its timeline, and its budget line. You knew what you were buying and roughly what it would cost. AI has now pushed into every one of those stages: quietly, quickly, and in ways that most brands haven't fully mapped yet.

Before the Shoot: Where AI Is Becoming Indispensable

Even in pre-production, AI has started to change how creative teams work.

Moodboarding used to take days. A creative director would pull references from a dozen sources, curate them into a coherent visual story, present them to a client, revise based on feedback, and finally arrive at a direction everyone trusted enough to build a shoot around. That process could easily consume a week of senior creative time, and it still might not land right.

AI has compressed that cycle. Tools like Midjourney, Adobe Firefly, and a growing range of purpose-built creative platforms allow teams to generate visual references from text prompts in minutes, iterate on them in real time, and arrive at a shareable moodboard in a fraction of the time it used to take. Misalignments that used to surface on shoot day now surface in a Figma file.

But there's a limitation worth naming here, because it matters more than most people admit. AI moodboarding tools are trained on the same enormous corpus of visual content, which means teams working independently, for different brands, in different categories, will often pull from an overlapping aesthetic vocabulary. The references start to rhyme. If a creative team isn't intentional about pushing past the first wave of AI outputs, visual strategies can converge toward a kind of algorithmic median. The tool is powerful, but the critical eye still has to be human.

AI in styling and art direction is following a similar trajectory. For e-commerce brands managing large SKU catalogs, the ability to virtually test set concepts before physically building them has a direct impact on shoot efficiency and on-set costs. Fewer surprises at call time means fewer expensive decisions made under pressure.

But human art directors remain essential in the layer of brand-specific judgment that AI simply doesn't have access to. The cultural nuance in a casting decision. The instinct that a particular shot concept has been done to death in this category, even if it generates beautifully. The understanding that what this brand needs right now isn't the technically correct answer, but the creative one. AI can show you a hundred competent directions, but it can't yet tell you which one is right.

Lighting simulation and set design pre-visualization round out the pre-production picture. The ability to preview camera angles and simulate how a set layout will photograph is reducing the expensive improvisation that used to happen on set. Less improvisation means tighter shoot days, and tighter shoot days mean lower production costs.

What E-Commerce Is Actually Doing With AI-Generated Visual Content

Strip away the hype and the think-pieces and look at where e-commerce brands are actually deploying AI-generated visual content right now, and a practical pattern emerges. It's not where most of the coverage focuses.

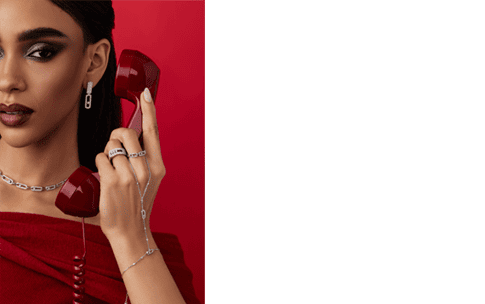

Instead of organizing a full production, you can take real product photography and generate the entire creative direction on top: models, environments, lighting, mood. The context becomes flexible and scalable without the need to physically produce it.

Whether in a studio or on location, productions with models are expensive, time-consuming, and operationally heavy. You’re dealing with casting, locations, styling, lighting, and crew coordination, and you’re paying everyone involved. Even a straightforward shoot quickly turns into a complex and costly process. At the same time, you often already have what matters most: a strong, clean product shot.

Localization is a rarely discussed use case that deserves more attention. A global e-commerce brand running campaigns across twelve markets used to face a difficult choice: produce market-specific imagery at high cost, or run the same visual everywhere and accept the disconnect. AI-generated scene adaptation, which changes the ambient visual language of an image so that it feels local, is changing that calculus.

A/B variant production has been similarly transformed. The traditional economics of creative testing, where producing eight versions of a hero image costs eight times as much as producing one, meant most brands tested less than they should have. AI-assisted production pushes the marginal cost of each additional variant much lower. Brands are running more creative experiments, learning faster, and making better allocation decisions as a result.
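The cost argument can be made concrete with a little arithmetic. The sketch below is illustrative only: the function names, the base cost, and the `ai_marginal_fraction` parameter are hypothetical placeholders, not figures from any real production budget. It assumes a traditional workflow where every variant costs as much as the first, and an AI-assisted workflow where one image is produced in full and each extra variant costs a small fraction of that.

```python
def traditional_cost(base_cost: float, variants: int) -> float:
    """Every variant is produced from scratch, so cost scales linearly."""
    return base_cost * variants


def ai_assisted_cost(base_cost: float, variants: int,
                     ai_marginal_fraction: float) -> float:
    """One full production, then each extra variant costs a fraction of it.

    ai_marginal_fraction is an assumed ratio (e.g. 0.05 = 5% of base cost).
    """
    if variants < 1:
        return 0.0
    return base_cost + base_cost * ai_marginal_fraction * (variants - 1)


if __name__ == "__main__":
    base = 1000.0  # hypothetical cost of one hero image
    for n in (1, 4, 8):
        print(n, traditional_cost(base, n), ai_assisted_cost(base, n, 0.05))
```

Under these assumed numbers the traditional cost grows in lockstep with the number of variants, while the AI-assisted cost stays close to the cost of a single production. The real ratio will depend on the tooling, the review time per variant, and how much human retouching each output still needs.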

Where brands are being more careful is on hero product imagery, the images that carry the most commercial weight and sit closest to the purchase decision. AI still has meaningful limitations here. Physical accuracy is inconsistent: reflections, material textures, the precise way a hem falls or a surface catches light. These are exactly the details that matter most in a product shot, and exactly the details where current generative models are least reliable.

There's also a platform compliance layer that brands operating at scale need to take seriously. Major e-commerce platforms are actively developing policies around AI-generated imagery, including disclosure requirements and even restrictions on certain categories. The policy landscape is moving, and brands that have embedded AI-generated content deeply into their production workflows without tracking it are taking on real compliance risk.

The honest framing is this: AI-generated content is filling a content gap that was never economically viable for studios to fill in the first place. The volume of visual content a modern e-commerce operation needs (for email, for social, for paid, for seasonal refreshes, for international markets) has always outpaced what traditional production could sustain. AI is addressing that gap. What it isn't doing, and shouldn't be positioned as doing, is replacing the high-investment imagery that actually builds brand equity.

Does AI Work in Retouching?

High-end professional product retouching is not primarily a technical process; it's a decision process. A skilled retoucher isn't just correcting problems but making judgment calls about what serves the image. What to leave. What to adjust. When a shadow is doing structural work and shouldn't be touched. When a jewelry texture that technically reads as an imperfection is actually part of what makes a product real. AI doesn't have taste or judgment. It has patterns, and it applies those patterns consistently, which is a different thing entirely from applying them wisely.

Leather with genuine grain and wear, the particular sheen of heavy silk, the way raw denim loses detail at the fold, brushed metal that needs to feel cold: these are the material qualities that make products feel real in a photograph, and they are exactly what current AI retouching tools tend to flatten.

Brand consistency at a nuanced level is another area of real concern. AI retouching tools do not know your brand's specific visual language, your preferred skin tone treatment, your established approach to shadow and highlight. Applied at scale without careful oversight, inconsistencies accumulate in ways that are hard to catch in a standard QA pass and that gradually erode the coherence of a brand's visual identity.

Finally, AI retouching tools have a tendency toward over-processing that can make imagery feel synthetic even when it wasn't generated. For brands where authenticity and credibility are central to positioning, this is a genuine risk that deserves more attention than it typically receives.

AI in Commercial Photography File Management

A mid-size e-commerce brand running seasonal campaigns across multiple categories can generate between 5,000 and 20,000 image assets per season. Each of those assets needs to be named, tagged, routed to the right channels, sized to the right specs, and made findable by people who weren't in the room when they were created. The manual overhead of that process is enormous, and it's overhead that has historically consumed the time of skilled people who should be doing something more valuable.

AI can handle meaningful portions of this work, starting with auto-tagging by product category, color, shot type, and content attributes. The time math is real. Operations and production management teams report recovering hours per week through AI-assisted asset workflows. That time compounds. Faster asset retrieval means faster QA cycles. Faster QA cycles mean faster publishing. And faster publishing, in an environment where content calendars are relentless and windows for trend-relevant content are short, has direct commercial value.
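As a rough illustration of what happens once tags exist, here is a minimal sketch of turning them into consistent file names and channel routing. Everything in it is an assumption made for the example: the `Asset` fields, the naming pattern, and the `CHANNELS_BY_SHOT_TYPE` table are hypothetical, and the tags are entered by hand where a real workflow would take them from an auto-tagging step.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    """Hypothetical record of the tags attached to one image."""
    sku: str
    category: str
    color: str
    shot_type: str  # e.g. "hero", "detail", "lifestyle"
    season: str


# Hypothetical routing table: which channels each shot type feeds.
CHANNELS_BY_SHOT_TYPE = {
    "hero": ["pdp", "paid"],
    "detail": ["pdp"],
    "lifestyle": ["social", "email"],
}


def slug(value: str) -> str:
    """Lowercase a tag and join its words with hyphens."""
    return "-".join(value.lower().split())


def asset_filename(asset: Asset, sequence: int, ext: str = "jpg") -> str:
    """Build a predictable, searchable file name from the asset's tags."""
    parts = [asset.season, asset.category, asset.sku,
             asset.color, asset.shot_type]
    return "_".join(slug(p) for p in parts) + f"_{sequence:03d}.{ext}"


def route(asset: Asset) -> list[str]:
    """Return target channels; unknown shot types go to manual review."""
    return list(CHANNELS_BY_SHOT_TYPE.get(asset.shot_type, ["review"]))


def find(assets: list[Asset], **criteria: str) -> list[Asset]:
    """Filter assets by exact tag values, e.g. find(assets, color="Black")."""
    return [a for a in assets
            if all(getattr(a, key) == value for key, value in criteria.items())]


if __name__ == "__main__":
    jacket = Asset("AB123", "Outerwear", "Dark Olive", "hero", "FW25")
    print(asset_filename(jacket, 7))
    print(route(jacket))
```

The design choice worth noting is the fallback to a `review` channel: anything the tagging step labels unexpectedly is held for a person to check rather than published automatically.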

Visual Production Has Changed. Has Your Thinking Caught Up?

In pre-production, AI accelerates the creative development process and reduces the cost of creative exploration, but the quality of what comes out is still bound by the quality of the thinking that goes in.

In content generation, AI fills volume and variation gaps that traditional production was never equipped to fill economically, but it has not yet proven itself for the hero product imagery that actually drives transactions.

In file management, AI is delivering genuine operational efficiency with relatively low implementation risk. In retouching, AI handles nuance and judgment poorly.

The risk for brands right now is adopting AI without a clear understanding of which parts of the pipeline it genuinely belongs in, and letting the efficiency gains in the places where it works blind you to the quality erosion in the places where it doesn't.

At LenFlash, this is the conversation we're having with clients every week. The answers look different depending on the brand, the category, and the content type. But the question is always the same: where is AI actually serving your work, and where is it quietly working against it?